Timeliness, fairness, and transparency in the examination evaluation process are key to student satisfaction with the overall learning experience. Examination results reflect how well learners have absorbed the subject matter, and the feedback a teacher provides alongside the grade is crucial for a student to improve in weak areas.

The traditional marking method was plagued by problems: human errors such as inadvertently missing questions or skipping pages that were stuck together, mis-totaling, allotting more marks than a question permits, the overhead of manually entering marks into the online exam result portal, and so on. Because of the confidentiality and sensitivity involved, evaluators could neither work from home nor choose a convenient time of day to work.

This led to longer turnaround times, delays in result declaration, and suboptimal feedback given in haste. Further, finding a particular script later for revaluation or for referral by the student was cumbersome.

To top it all, there was no way for senior management to run analytics to monitor the efficiency of evaluations in terms of time consumed, error rate, quality, etc. An On-Screen Marking (OSM) platform addresses all of the above inefficiencies through the use of smart technology.

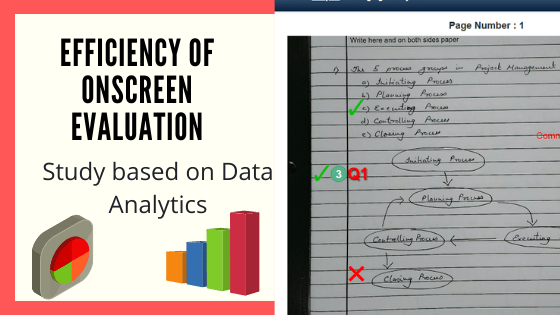

The present paper is a pilot study of one such platform, Eklavvya, developed by Splashgain. It analyzes the enhancement of student learning and the cost savings that may result from deploying OSM, and considers its future with artificial intelligence.

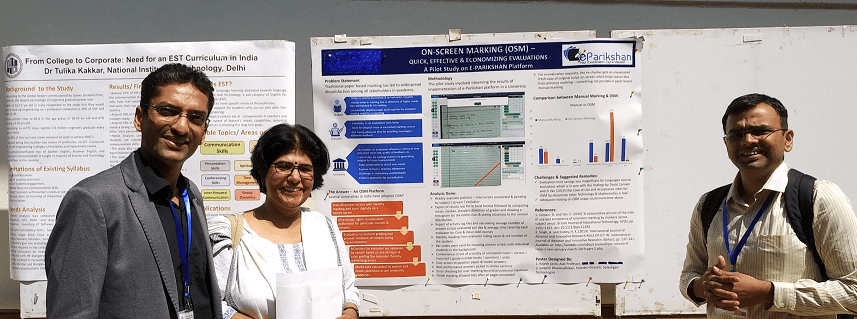

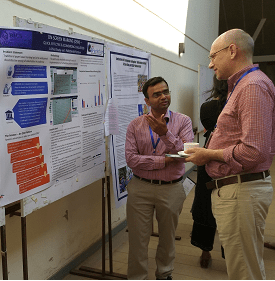

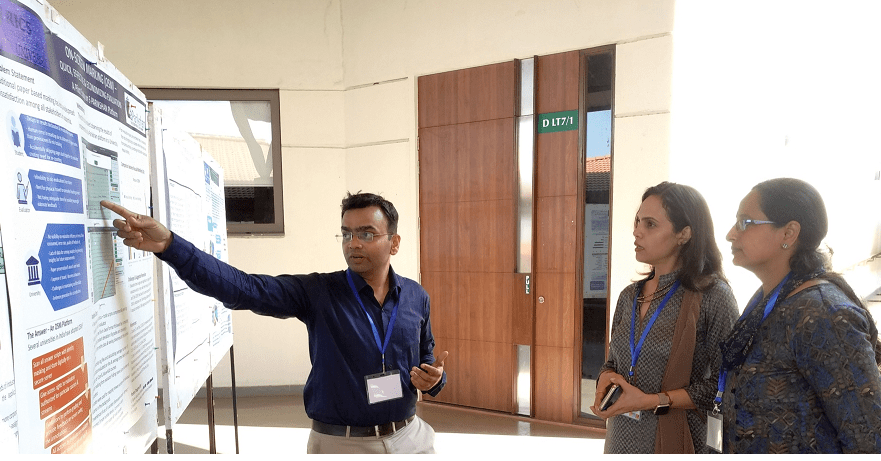

A poster and a talk were presented by Rajesh Joshi and Swapnil Dharmadhikari at a recently concluded international conference hosted by BITS Pilani Goa Campus on 13-15 Feb ‘20 around the theme ‘Best Teaching Practices for Engaged Student Learning’. The event drew senior professors and institutional heads from prestigious institutions, not only from within India (IITs, IIIT, BITS) but also from the National University of Singapore and The University of Hong Kong.

The pilot project aimed to highlight the role of technology in evaluating scripts digitally on a computer screen, which is much more efficient than the archaic paper-based method. Declaring results on time with high marking accuracy is a huge challenge, and OSM can be immensely helpful here.

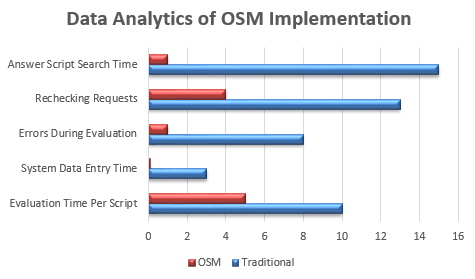

Millions of answer scripts have been evaluated using the Onscreen Evaluation platform to date. This study was done on the data of a large, well-known university after anonymizing the names of institutes, evaluators, and students. The results obtained are depicted in the chart below, followed by interpretations:

1. Evaluation time per answer script reduced substantially

The traditional evaluation process spends time on physically handling the answer script, navigating and evaluating all of its pages, and calculating total marks according to the examination pattern.

Usage of technology has considerably reduced the overall time required for answer script evaluation. Typically, examiners take 8 to 9 minutes to evaluate a single answer script.

Technology eliminates physical handling of the answer script. Examiners can navigate through answer scripts and add annotations quickly.

Blank pages of answer scripts can be easily identified and marked in bulk, which further reduces evaluation time.

Based on our data analysis, the time needed to evaluate a single answer script fell by more than 50%. The efficiency of the examiners has improved due to technology adoption.
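As a rough illustration of what this means at scale, the sketch below estimates the examiner time saved on a batch of scripts. It assumes the 8 to 9 minute figure is the pre-OSM baseline and treats the 50% reduction as a lower bound; the batch size of 10,000 scripts and the function name are hypothetical and do not come from the study.

```python
def evaluation_hours(scripts: int, minutes_per_script: float) -> float:
    """Total examiner hours needed to evaluate a batch of scripts."""
    return scripts * minutes_per_script / 60


# Assumptions (not from the study): the batch size, and 8.5 minutes
# as the midpoint of the reported 8 to 9 minute range.
SCRIPTS = 10_000
BASELINE_MINUTES = 8.5
REDUCTION = 0.50  # the study reports "more than 50%", so this is a lower bound

before = evaluation_hours(SCRIPTS, BASELINE_MINUTES)
after = evaluation_hours(SCRIPTS, BASELINE_MINUTES * (1 - REDUCTION))
saved = before - after

print(f"Before: {before:.0f} h, after: {after:.0f} h, saved: {saved:.0f} h")
```

Changing `SCRIPTS` or `BASELINE_MINUTES` to an institution's own figures gives a quick first estimate of the examiner hours involved.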

2. System Data Entry time eliminated

Traditional answer script checking involved the overhead of entering each student's marks into the system for result processing. Examiners needed to calculate the total, tally it against the exam pattern, and make the entry for each answer script. Data entry usually takes 2 to 4 minutes per answer script.

OSM technology eliminates the data entry activity altogether: the system calculates the total marks, which can be exported to a result processing system without any human intervention. Examiners no longer need to enter data for each answer script. From an average of 3 minutes per answer script, data entry time is reduced to zero.
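The automatic totalling and export step can be sketched as follows. This is a minimal illustration, not Eklavvya's actual implementation; the record layout, the `total_marks` and `export_results` names, and the CSV columns are all assumptions made for the example.

```python
import csv
import io


def total_marks(question_marks: dict[str, float]) -> float:
    """Sum per-question marks so no examiner has to total by hand."""
    return sum(question_marks.values())


def export_results(scripts: dict[str, dict[str, float]]) -> str:
    """Return CSV text (script_id, total) for a result processing system."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, lineterminator="\n")
    writer.writerow(["script_id", "total"])
    for script_id, marks in sorted(scripts.items()):
        writer.writerow([script_id, total_marks(marks)])
    return buffer.getvalue()


# Hypothetical marks captured on screen for two anonymized scripts.
marked = {
    "S-002": {"Q1": 7, "Q2": 5.5, "Q3": 9},
    "S-001": {"Q1": 8, "Q2": 6, "Q3": 10},
}
print(export_results(marked))
```

Because the totals are derived from the marks the examiner already entered against each question, there is no separate manual step in which a transcription error could occur.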

3. Error percentage lowered significantly

The manual evaluation process is error-prone: examiners might skip some pages or make a mistake while calculating a student's total marks. The overall error percentage has come down drastically, from 8% to just 1%.

The following types of errors were reduced due to technology adoption:

- Calculation of the sum
- Skipping of pages during the evaluation
- Skipping of a question during the evaluation
- Skipping of a supplement during the evaluation
- Assigning more marks than the maximum allotted for a particular question
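The error types listed above lend themselves to automated checks before a script is submitted. The sketch below shows one way such checks might look; the `MarkedScript` record and the `find_errors` function are hypothetical names for illustration and do not describe the platform's real data model. Supplements are treated here simply as further pages of the scanned script.

```python
from dataclasses import dataclass, field


@dataclass
class MarkedScript:
    """Hypothetical record of one evaluated script."""
    total_pages: int
    pages_viewed: set[int] = field(default_factory=set)
    marks: dict[str, float] = field(default_factory=dict)  # question -> awarded marks


def find_errors(script: MarkedScript, max_marks: dict[str, float]) -> list[str]:
    """Return the problems that should block submission of this script."""
    errors = []
    # Skipped pages, including any supplement pages in the scan.
    missing = sorted(set(range(1, script.total_pages + 1)) - script.pages_viewed)
    if missing:
        errors.append(f"pages not viewed: {missing}")
    for question, limit in max_marks.items():
        awarded = script.marks.get(question)
        if awarded is None:
            errors.append(f"{question}: no marks entered")  # skipped question
        elif awarded > limit:
            errors.append(f"{question}: {awarded} exceeds maximum {limit}")
    return errors


max_marks = {"Q1": 10, "Q2": 10, "Q3": 5}
script = MarkedScript(total_pages=4, pages_viewed={1, 2, 4}, marks={"Q1": 8, "Q2": 12})
for problem in find_errors(script, max_marks):
    print(problem)
```

In this sketch an unattempted question still needs an explicit entry (for example 0), so that a question the examiner overlooked can be told apart from one the student left blank.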

4. Revaluation requests by students plummeted

Re-evaluation requests are directly proportional to the number of errors committed by examiners during the evaluation phase. The traditional evaluation process is error-prone, with an error rate of 8 to 10%, and students typically respond by requesting rechecking or revaluation.

Onscreen marking has helped reduce errors during the evaluation phase, as mentioned in point 3, which in turn reduced the number of rechecking requests from students.

The number of rechecking requests has fallen by more than 80%. Overall satisfaction with the evaluation process has improved.

5. Feedback length of examiners for midterm/tutorial exams improved

Digital answer script evaluation gives examiners the flexibility to write feedback comments on a particular answer written by the student. The system can extract the examiner's feedback comments, which can then be easily shared with students in the case of tutorial, midterm, or practice exams. Typing feedback is easier than writing it with a pen, and this resulted in a better feedback mechanism for evaluators and students.

6. Answer Script Search and Extraction time dropped

Physical handling of answer scripts is time-consuming, and extracting a particular answer script from a bundle is a nightmare. Typically, manually extracting an answer script from thousands of available scripts can take around 15 minutes.

OSM technology ensures that digital copies of answer scripts are available at any time with a single click. The administration can easily search for and extract the digital copies associated with a particular student or subject.
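The reason digital retrieval is so much faster can be shown with a small lookup index. This is only a sketch under assumed names: the `ScriptIndex` class, the file paths, and the student and subject identifiers are hypothetical, and a production system would more likely use a database.

```python
from collections import defaultdict
from typing import Optional


class ScriptIndex:
    """Hypothetical in-memory index of scanned scripts by student and subject."""

    def __init__(self) -> None:
        self._by_student = defaultdict(set)
        self._by_subject = defaultdict(set)

    def add(self, path: str, student_id: str, subject: str) -> None:
        """Register one scanned script under its student and subject."""
        self._by_student[student_id].add(path)
        self._by_subject[subject].add(path)

    def find(self, student_id: Optional[str] = None,
             subject: Optional[str] = None) -> list[str]:
        """Return sorted paths matching every criterion that was given."""
        matches = []
        if student_id is not None:
            matches.append(self._by_student.get(student_id, set()))
        if subject is not None:
            matches.append(self._by_subject.get(subject, set()))
        if not matches:
            return []
        return sorted(set.intersection(*matches))


index = ScriptIndex()
index.add("scans/stu17_physics.pdf", "STU-17", "Physics")
index.add("scans/stu17_maths.pdf", "STU-17", "Maths")
index.add("scans/stu42_physics.pdf", "STU-42", "Physics")

print(index.find(student_id="STU-17", subject="Physics"))
```

Each lookup is a dictionary access rather than a manual search through a physical bundle, which is why retrieval time no longer grows with the number of stored scripts in any way an administrator would notice.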

Summary

The pilot study provided useful insights into technology adoption for the answer script evaluation process. Technology can play a key role in the evaluation process and can help improve accuracy and efficiency. In the future it could be further aided by AI-driven evaluation, combining human and machine-driven evaluation of answer scripts.

About Authors

Rajesh is an Assistant Professor at Amity University, Mumbai, with over 18 years of combined experience in professional practice, teaching and research.

Swapnil has more than 15 years of experience in the Information Technology sector. He has been working on digital platforms in the education sector. Swapnil is an avid reader and writes articles on technology and leading developments in the education and IT sectors. Swapnil has an active podcast about Education Technology which has various discussions about trends in education technology adoption.

Swapnil is the founder of Splashgain, an Education Technology company from India working on various innovative EdTech platforms for examinations, proctoring solutions, digital admission process and more.
